Blogs.

Introducing mRAG: How EverOS Retrieves What Actually Matters

This post explains what mRAG is, why it exists, and what it means for you and your agents.

mRAG

multimodal

multimodal retrieval

RAG

Introducing Self-Evolving Agent Memory: How EverOS Helps Your AI Agents Learn from Experience

This post explains what Agent Memory is, how it works, and what it means for building agents that actually improve over time.

Self-Evolving Agent Memory

Agent Memory

Self-Evolving

Agent Skills

Agent Cases

Breaking the 100M Token Limit: MSA Architecture Achieves Efficient End-to-End Long-Term Memory for LLMs

We present Memory Sparse Attention (MSA): an end-to-end trainable, scalable sparse latent-state memory framework.

long term memory

RAG

context

ai agent

OpenClaw

sparse attention

transformers

LLM

KV cache

EverOS: SOTA Results Across Four Memory Benchmarks and What It Means for LLM Agents

We have released our latest research on EverOS, now available on arXiv! Large Language Models are quickly evolving from single-turn chatbots into long-term interactive agents. But as soon as an agent is expected to stay coherent across weeks of conversations, it runs into a practical ceiling: a limited context window and fragmented memory. Even with retrieval, many systems still behave as if they are pulling isolated snippets, often missing conflicts, failing to update user state, or giving inconsistent guidance over time. In our latest research, we introduce EverOS, a self-organizing memory operating system that treats memory not as a flat store but as a lifecycle, inspired by biological “engram” principles, so agents can continuously transform raw interactions into structured, evolving knowledge.

EverOS

long term memory

RAG

context

LoCoMo

LongMemEval

PersonaMem

A Unified Evaluation Framework for AI Memory Systems

Using a unified, production-grade evaluation framework, we benchmarked leading memory systems (EverOS, Mem0, MemOS, Zep, and MemU) under the same datasets, metrics, and answer model. This framework provides a fair, transparent, and reproducible standard for evaluating real-world memory performance in the Agentic Era. EverOS delivered best-in-class results on both LoCoMo and LongMemEval.

AI Memory

Evaluation Framework

EverOS

Mem0

MemU

Zep

MemOS

LoCoMo

LongMemEval

EverOS Hits SOTA Performance on LoCoMo

EverOS is an intelligent memory operating system designed to give AI the ability not just to remember, but to understand, reason, and evolve. On the LoCoMo benchmark, our approach built on EverOS achieved 92.3% reasoning accuracy (evaluated by LLM-Judge), outperforming comparable methods in our internal evaluation.

SOTA

LoCoMo

long-term memory

Personal Knowledge Base AI: Enhancing Productivity with AI Knowledge Management Tools

This article will explore the concept of personal knowledge base AI, its core features, and how it can significantly improve knowledge management practices.

personal knowledge management

productivity

How Personalized AI Assistants Remember My Preferences to Enhance Productivity

This article delves into how AI remembers preferences, the technology behind it, and the significant benefits it offers, particularly for professionals like financial advisors.

productivity

AI assistants

ChatGPT Remember Me: How Personalized Memory Enhances Your AI Conversations

This article delves into how ChatGPT's memory works, the benefits it offers to both users and businesses, and the privacy measures in place to protect user data.

ChatGPT

personalized memory

AI Assistant Long-Term Memory: Enhancing Persistent Context and Productivity

In this article, we will explore the mechanisms behind AI long-term memory, its benefits for businesses, and how Evermind AI's EverOS facilitates advanced memory management.

AI assistant

long-term memory

Personal AI with Memory: Enhancing AI Memory Management and Knowledge Retention

Readers will learn about the different types of memory architectures that support effective knowledge retention, the role of memory-augmented neural networks, and how Evermind AI's EverOS facilitates persistent memory in AI agents.

personal AI

AI memory

How to Add Memory to AI Agents: Understanding AI Agent Memory Architecture and Integration

The discussion will cover key types of memory in AI agents, including persistent and contextual memory, and how these types enhance AI performance.

AI agent

agent memory

Best Mem0 Alternatives for AI Agent Memory in 2026: A Comprehensive Comparison

In this comprehensive guide, we cover the top five alternatives to Mem0 in 2026, including Evermind.ai, Zep, Letta, Cognee, and Supermemory. We compare their core features, pricing structures, benchmark performance, ideal use cases, and target audiences to help you make an informed decision.

mem0

EverOS

Zep

Letta

Cognee

Supermemory

AI agent

agent memory

Best MemGPT Alternatives for AI Agent Memory in 2026: A Comprehensive Comparison

Whether you are looking to escape framework lock-in, reduce the high token costs of self-editing memory, or simply find a more reliable memory layer for production, this guide breaks down the best MemGPT alternatives available today.

MemGPT

EverOS

Best Letta Alternatives for AI Agent Memory in 2026: A Comprehensive Comparison

Whether you are encountering framework lock-in, struggling with the unpredictability of self-editing memory, or simply seeking higher benchmark performance, this guide breaks down the best alternatives to Letta in 2026.

Letta

EverOS

Zep

mem0

Cognee

long-term memory

AI agent

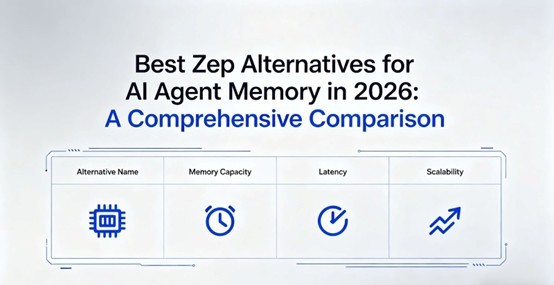

Best Zep Alternatives for AI Agent Memory in 2026: A Comprehensive Comparison

In this comprehensive guide, we explore the top alternatives to Zep in 2026—including Evermind.ai, Mem0, Letta, Cognee, and LangMem—comparing their architecture, benchmark performance, pricing, and ideal use cases to help you make an informed decision.

Zep

EverOS

AI agent

agent memory

Agent Memory Framework: Understanding AI Agent Memory Systems for Persistent Contextual Intelligence

In this article, we will explore the mechanisms behind AI agent memory systems, their significance in various applications, and how they can transform business operations. As organizations increasingly rely on AI solutions, understanding the agent memory framework becomes crucial for leveraging its full potential. We will delve into the workings of these systems, the role of cognitive architecture, and the specific benefits they offer, particularly in the financial advisory sector.

AI agent

EverOS

agent memory

Agent Memory in AI: How AI Agent Memory Management Enhances Contextual and Persistent AI Systems

This article will explore the concept of agent memory, its importance in AI, and how it can improve overall AI performance. Additionally, we will discuss the architecture that supports these memory systems and the various business applications of agent memory, particularly in enhancing productivity and service delivery. Finally, we will examine Evermind AI's proprietary operating system, EverOS, which is designed to optimize agent memory functionalities for enterprise applications.

agent memory

AI agents

EverOS