Introducing mRAG: How EverOS Retrieves What Actually Matters

Memory systems have a retrieval problem. Most of them find things that sound relevant to your query. EverOS mRAG finds things that are relevant — by going a level deeper than any standard search can reach.

This post explains what mRAG is, why it exists, and what it means for you and your agents.

The problem with how most memory retrieval works

When you ask a memory system something, the standard approach is to take your query, turn it into a vector, and find the stored summaries that are closest to it. Fast, simple, and — for complex questions — frequently wrong.

Here's why. The things you actually need are often buried inside a memory, not visible at the summary level. An episode summary might say "discussed project timelines and team allocation." The detail you need — "the team agreed that the Q2 deadline was unrealistic" — lives two levels down, in the atomic facts extracted from that conversation.

Standard retrieval never gets there. It finds the right neighbourhood but hands you the street address when you need the apartment number.

mRAG is built to fix this.

What mRAG does differently

mRAG stands for Multimodal RAG. The core idea is a hierarchical search: start with a coarse scan of episode summaries to find the right general territory, then expand into the precise atomic facts within those episodes — and use those facts to replace the coarser summaries in the final result.

In other words, mRAG treats retrieval as a two-level problem. Summaries are a map. Atomic facts are the territory. You navigate using the map, but you return the territory.

The result is a set of specific, verifiable, fine-grained facts that precisely answer your query — not a collection of vague summaries that approximately relate to it.

How it works: a five-phase pipeline

Phase 1: Coarse retrieval across episode summaries

mRAG starts by running two searches in parallel against your episode memory:

An embedding search — comparing the semantic meaning of your query against episode summaries, using cosine similarity

A BM25 keyword search — matching the actual words in your query against episode and summary text

The two sets of results are then fused into a single ranked list. By default, EverOS uses RRF (Reciprocal Rank Fusion), which combines ranking position from both searches without over-weighting either signal. An LR (logistic regression) fusion mode is also available for environments where a trained model can learn the optimal blend of signals.

This phase returns a rough shortlist: episodes that are probably relevant to your query.
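The fusion step can be sketched in a few lines. This is a minimal illustration of standard Reciprocal Rank Fusion, not the EverOS implementation: the function name `rrf_fuse`, the constant `k=60` (a commonly used value), and the episode IDs are all assumptions made for the example.

```python
from collections import defaultdict

def rrf_fuse(rankings, k=60):
    """Fuse several ranked lists of episode IDs with Reciprocal Rank Fusion.

    Each episode scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is an assumed, commonly used constant.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, episode_id in enumerate(ranking, start=1):
            scores[episode_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the order is deterministic.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical result orders from the two Phase 1 searches.
embedding_hits = ["ep_002", "ep_007", "ep_001"]
bm25_hits = ["ep_002", "ep_009", "ep_007"]

fused = rrf_fuse([embedding_hits, bm25_hits])
for episode_id, score in fused:
    print(f"{episode_id}  {score:.4f}")
```

Because RRF only looks at rank positions, an episode that appears near the top of both lists (here `ep_002`) rises above one that is ranked well by a single search, regardless of how the raw cosine and BM25 scores are scaled.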

Phase 2: Setting up the search structure

Before expanding into atomic facts, mRAG initialises two data structures:

A min-heap that holds the current top-N results. Because the weakest result sits at the root of the heap, deciding whether a new candidate is good enough to displace an existing one takes O(1) time; the replacement itself costs O(log N).

A max-heap over all the coarse results, ordered by relevance score, used to decide which episodes to expand next.

Think of it as: the min-heap is the answer you'd give right now, and the max-heap is the queue of episodes still waiting to be investigated.
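As a rough sketch of these two structures, here is how they might look with Python's standard `heapq` module. The scores, the episode IDs, and `TOP_N` are made up for illustration; how EverOS actually stores these heaps is not described in this post.

```python
import heapq

TOP_N = 3  # hypothetical result size

# Coarse results from Phase 1 as (score, episode_id); the values are invented.
coarse = [(0.72, "ep_002"), (0.55, "ep_007"), (0.64, "ep_009"), (0.31, "ep_001")]

# Expansion queue: heapq is a min-heap, so scores are negated to pop the best first.
expand_queue = [(-score, ep_id) for score, ep_id in coarse]
heapq.heapify(expand_queue)

# Current answer: a min-heap capped at TOP_N, so top_n[0] is the weakest kept item.
top_n = []
for score, ep_id in coarse:
    if len(top_n) < TOP_N:
        heapq.heappush(top_n, (score, ep_id))
    elif score > top_n[0][0]:                      # O(1) peek at the weakest result
        heapq.heapreplace(top_n, (score, ep_id))   # O(log N) swap

# The next episode to investigate is the highest-scoring one in the queue.
neg_score, next_episode = heapq.heappop(expand_queue)
next_score = -neg_score
```

The min-heap keeps the weakest current result at index 0, which is exactly the item a new candidate has to beat.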

Phase 3: Expansion — the core of mRAG

This is where mRAG does something no flat retrieval system does.

The pipeline processes the episode queue in batches. For each episode it pops, it retrieves the atomic facts associated with that episode — the specific, discrete statements extracted from that conversation or document — and scores them against your original query.

The scoring formula is:

final_score = α × fact_score + (1 - α) × episode_score

Where:

fact_score is how closely the atomic fact matches your query (logistic regression of cosine similarity and BM25)

episode_score is the propagated relevance of the parent episode (logistic regression of cosine similarity and BM25 from Phase 1)

α balances how much weight to give the fact itself versus the episode it came from (default: 0.5)
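To make the formula concrete, here is a small worked example. The function name and the input scores are illustrative assumptions; only the formula and the default α of 0.5 come from the description above.

```python
def final_score(fact_score, episode_score, alpha=0.5):
    """Blend a fact's own relevance with the relevance of its parent episode."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    return alpha * fact_score + (1 - alpha) * episode_score

# Hypothetical scores: a strong fact inside a moderately relevant episode.
balanced = final_score(fact_score=0.9, episode_score=0.7)              # default alpha
fact_heavy = final_score(fact_score=0.9, episode_score=0.7, alpha=0.8)

print(f"alpha=0.5: {balanced:.2f}")
print(f"alpha=0.8: {fact_heavy:.2f}")
```

With the default weighting the fact lands at roughly 0.80; raising α to 0.8 moves it to roughly 0.86, closer to the fact's own score and less dependent on the episode it came from.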

The critical design: when an atomic fact scores well enough to enter the top-N results, its parent episode is removed from that set. The precise fact replaces the coarse summary. You get the specific detail, not the container it was stored in.

The loop continues until either all episodes have been processed or there is no improvement across several consecutive rounds, which is a convergence check that prevents wasted computation when the results have already stabilized.
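The loop described above can be sketched as follows. This is a simplified reading of the description, not the EverOS source: it uses a plain dictionary for the top-N set instead of a heap to keep the code short, and the names `expand`, `batch_size`, and `patience`, along with all the data, are assumptions.

```python
import heapq

def expand(coarse, facts_by_episode, top_n=3, alpha=0.5, batch_size=2, patience=2):
    """Expand episodes into facts until the queue is empty or results stop improving.

    coarse: list of (episode_score, episode_id) from Phase 1.
    facts_by_episode: {episode_id: [(fact_score, fact_id), ...]}.
    Returns (final_score, kind, id) tuples, best first.
    """
    queue = [(-score, ep_id) for score, ep_id in coarse]
    heapq.heapify(queue)
    # Seed the current answer with the best coarse episodes.
    results = {("episode", ep_id): score for score, ep_id in heapq.nlargest(top_n, coarse)}
    stale_rounds = 0
    while queue and stale_rounds < patience:
        improved = False
        for _ in range(min(batch_size, len(queue))):
            neg_score, ep_id = heapq.heappop(queue)
            ep_score = -neg_score
            for fact_score, fact_id in facts_by_episode.get(ep_id, []):
                score = alpha * fact_score + (1 - alpha) * ep_score
                if len(results) < top_n or score > min(results.values()):
                    results[("fact", fact_id)] = score
                    results.pop(("episode", ep_id), None)  # the fact replaces its parent
                    if len(results) > top_n:
                        del results[min(results, key=results.get)]
                    improved = True
        # Convergence check: count consecutive rounds without any change.
        stale_rounds = 0 if improved else stale_rounds + 1
    return sorted(((s, kind, item_id) for (kind, item_id), s in results.items()), reverse=True)

# Invented inputs for illustration.
coarse = [(0.72, "ep_002"), (0.55, "ep_007"), (0.64, "ep_009"), (0.31, "ep_001")]
facts = {
    "ep_002": [(0.91, "fact_001"), (0.20, "fact_002")],
    "ep_009": [(0.30, "fact_003")],
}
ranked = expand(coarse, facts)
```

In this run `fact_001` enters the top three and displaces its parent `ep_002`, while `ep_009` and `ep_007` stay in the set as episodes because none of their facts score high enough.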

Phase 4: Building the output

Once the loop converges, the top-N set contains a mix of items:

Episodes that were relevant but whose facts didn't score higher

Atomic facts that displaced their parent episodes

Both are surfaced in the response. Everything is sorted by final score before being returned.

Phase 5: [Optional] Reranking

For use cases where precision is critical, mRAG supports an optional final reranking pass. A dedicated rerank model re-scores the Phase 4 output, applying deeper semantic understanding to produce the final ranked list.

This step is off by default, because the first four phases alone already deliver strong precision. It is available for high-value retrieval scenarios where the extra latency is worth it.

What comes back

An mRAG search response contains two things:

Episodes — the relevant memory records that held context for your query, where no atomic fact cleanly superseded them.

Facts — the precise, atomic statements extracted from memory that directly answer your query. Each fact carries its topic label, its parent memory ID, and its final relevance score.

For example, a query like "what did we decide about the Q2 deadline?" might return:

    {
      "episodes": [
        {
          "id": "ep_002",
          "summary": "Team discussed project timeline and resource constraints",
          "score": 0.72,
          "atomic_facts": [
            {
              "id": "fact_001",
              "atomic_fact": "The team agreed the Q2 deadline is unrealistic given current headcount",
              "topic_name": "Project timeline",
              "score": 0.91,
              "parent_episode_id": "ep_002"
            }
          ]
        }
      ],
      "profiles": [...],
      "raw_messages": [...],
      "agent_memory": {...},
      "query": {
        "text": "...",
        "method": "hybrid",
        "filters_applied": {...}
      }
    }

In this example, mRAG returned both an episode and a fact. Episodes provide broader context, while facts provide more precise information.
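On the client side, pulling the facts out of such a response might look like the sketch below. It assumes the nested shape shown in the example (facts under each episode's `atomic_facts` key); the helper name `collect_facts` is hypothetical, and the elided fields of the example are simply left out.

```python
import json

# Trimmed copy of the example response; elided fields are omitted.
response_text = """
{
  "episodes": [
    {
      "id": "ep_002",
      "summary": "Team discussed project timeline and resource constraints",
      "score": 0.72,
      "atomic_facts": [
        {
          "id": "fact_001",
          "atomic_fact": "The team agreed the Q2 deadline is unrealistic given current headcount",
          "topic_name": "Project timeline",
          "score": 0.91,
          "parent_episode_id": "ep_002"
        }
      ]
    }
  ]
}
"""

def collect_facts(response):
    """Flatten the facts nested under each episode, highest score first."""
    facts = [
        fact
        for episode in response.get("episodes", [])
        for fact in episode.get("atomic_facts", [])
    ]
    return sorted(facts, key=lambda fact: fact["score"], reverse=True)

facts = collect_facts(json.loads(response_text))
for fact in facts:
    print(f'{fact["score"]:.2f}  {fact["atomic_fact"]}  (from {fact["parent_episode_id"]})')
```

Using `.get` with empty defaults keeps the helper safe for episodes that carry no facts.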

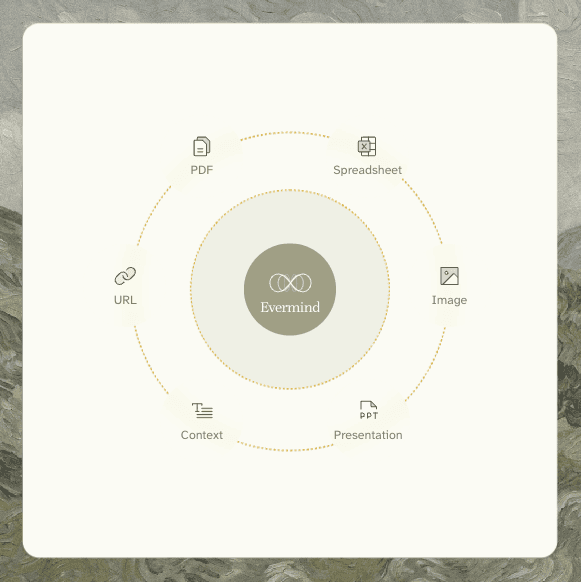

Works across all your content types

mRAG is data-type agnostic. The same pipeline retrieves across conversations, documents, emails, meeting notes, PDFs, spreadsheets, presentations, and images — because all of these are processed into the same Episodic Memory and AtomicFact structures at ingestion time.

Formats supported for multimodal parsing:

PDF (text-based and scanned)

Images (.png, .jpg, .jpeg, .webp, .tiff, .bmp, .svg)

Word documents (.docx, .doc)

Spreadsheets (.xlsx, .xls)

Presentations (.pptx, .ppt)

Email (.eml)

HTML (.html, .htm)

Formats extracted directly without parsing:

Plain text and Markdown (.txt, .md)

Subtitles and transcripts (.vtt)

Tabular plain text (.csv, .tsv)

Whether your relevant context lived in a conversation last Tuesday or in a PDF uploaded six months ago, mRAG retrieves across both with the same query, through the same endpoint.
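If you want to check a file against these two lists before uploading it, a lookup like the following is enough. It is purely a client-side convenience sketch: the function name, the returned labels, and the `.pdf` extension are assumptions, and the actual routing happens inside EverOS at ingestion time.

```python
from pathlib import Path

# Extensions taken from the two lists above; ".pdf" is assumed for PDF files.
PARSED_FORMATS = {
    ".pdf", ".png", ".jpg", ".jpeg", ".webp", ".tiff", ".bmp", ".svg",
    ".docx", ".doc", ".xlsx", ".xls", ".pptx", ".ppt", ".eml", ".html", ".htm",
}
DIRECT_FORMATS = {".txt", ".md", ".vtt", ".csv", ".tsv"}

def ingestion_path(filename):
    """Return which of the two documented routes a file would take, by extension."""
    suffix = Path(filename).suffix.lower()
    if suffix in PARSED_FORMATS:
        return "multimodal_parsing"
    if suffix in DIRECT_FORMATS:
        return "direct_extraction"
    return "unsupported"

for name in ["Q2-plan.PDF", "standup-notes.md", "archive.zip"]:
    print(name, "->", ingestion_path(name))
```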

How to use it

mRAG is available as a retrieval method on the EverOS search endpoint. Set "method": "hybrid" in your search request:

POST /api/v1/memories/search

    {
      "query": "what did we decide about the Q2 deadline?",
      "method": "hybrid",
      "filters": { "user_id": "user_123" },
      "top_k": 10
    }

The response will include both an episodes array and a facts array. For existing integrations, the change is backward-compatible: all non-mRAG methods return an empty facts field, so nothing breaks if you don't use it.
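From Python, the same request could be assembled with the standard library alone. This is a sketch under assumptions: `BASE_URL` is a placeholder rather than a real host, authentication is not covered in this post and is left out, and the helper name `build_search_request` is made up. Only the path and the body fields come from the request shown above.

```python
import json
import urllib.request

BASE_URL = "https://everos.example.invalid"  # placeholder host, replace with your deployment

def build_search_request(query, user_id, top_k=10, method="hybrid"):
    """Build (but do not send) a POST request for the memory search endpoint."""
    payload = {
        "query": query,
        "method": method,
        "filters": {"user_id": user_id},
        "top_k": top_k,
    }
    return urllib.request.Request(
        BASE_URL + "/api/v1/memories/search",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

request = build_search_request("what did we decide about the Q2 deadline?", "user_123")
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request), once BASE_URL and any required
# authentication headers are set for your environment.
```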

When to use mRAG vs other retrieval methods

EverOS offers several retrieval methods, each suited to different situations:

keyword — fast, exact-match retrieval. Good for when you know the specific term you're looking for.

vector — pure semantic embedding retrieval based on vector similarity. Good for open-ended, intent-driven queries where meaning matters more than exact words, and for finding contextually similar memories even when terminology differs.

agentic — iterative multi-hop retrieval using Memory Interleave. Good for complex, multi-step reasoning where the answer requires chaining evidence across multiple memories.

hybrid — the default. Hierarchical episode-to-fact retrieval, balancing efficiency and effectiveness. Best for queries where you need precision, where the answer is a specific fact rather than a general summary, and where your memory contains a mix of content types. Particularly strong for queries involving specific decisions, numbers, commitments, or details that tend to get lost inside broader summaries.

The performance picture

Internal benchmarks show that mRAG's hierarchical approach, which retrieves at the fact level rather than the episode level, consistently outperforms flat retrieval on queries that require specific detail. The highest-performing configuration retrieves using source-level context and returns facts, combining the broad coverage of the episode scan with the precision of atomic fact matching.

Flat chunk-based retrieval, by comparison, delivers lower accuracy on specific-detail queries and returns significantly less useful context per result.

All parameters that govern mRAG behavior, including the number of candidates retrieved at each stage, the score propagation weight, the convergence threshold, and the reranker toggle, are configurable via environment variables. They are tuned at the system level and not exposed to individual API requests, which means you get strong default performance without configuration overhead.

What this means for your agents

For agents that need to reason about specific past events (commitments made in meetings, decisions recorded in documents, facts extracted from research), mRAG is the retrieval layer that makes that reasoning reliable.

An agent using standard retrieval might find that a conversation about a project deadline happened. An agent using mRAG finds that the Q2 deadline slipped by six weeks, confirmed in the March 12 sync, because two engineers were reassigned.

The difference matters when your agent is advising on a project status, drafting a follow-up, or checking whether a prior commitment was kept.

Memory is only useful if retrieval is precise. That is what mRAG is built to do.

mRAG is available now on EverOS. To get started: everos.evermind.ai

Open-source repository: github.com/EverMind-AI/EverOS

Memory systems have a retrieval problem. Most of them find things that sound relevant to your query. EverOS mRAG finds things that are relevant — by going a level deeper than any standard search can reach.

This post explains what mRAG is, why it exists, and what it means for you and your agents.

The problem with how most memory retrieval works

When you ask a memory system something, the standard approach is to take your query, turn it into a vector, and find the stored summaries that are closest to it. Fast, simple, and — for complex questions — frequently wrong.

Here's why. The things you actually need are often buried inside a memory, not visible at the summary level. An episode summary might say "discussed project timelines and team allocation." The detail you need — "the team agreed that the Q2 deadline was unrealistic" — lives two levels down, in the atomic facts extracted from that conversation.

Standard retrieval never gets there. It finds the right neighbourhood but returns you the street address when you need the apartment number.

mRAG is built to fix this.

What mRAG does differently

mRAG stands for Multimodal RAG. The core idea is a hierarchical search: start with a coarse scan of episode summaries to find the right general territory, then expand into the precise atomic facts within those episodes — and use those facts to replace the coarser summaries in the final result.

In other words, mRAG treats retrieval as a two-level problem. Summaries are a map. Atomic facts are the territory. You navigate using the map, but you return the territory.

The result is a set of specific, verifiable, fine-grained facts that precisely answer your query — not a collection of vague summaries that approximately relate to it.

How it works: a five-phase pipeline

Phase 1: Coarse retrieval across episode summaries

mRAG starts by running two searches in parallel against your episode memory:

An embedding search — comparing the semantic meaning of your query against episode summaries, using cosine similarity

A BM25 keyword search — matching the actual words in your query against episode and summary text

The two sets of results are then fused into a single ranked list. By default, EverOS uses RRF (Reciprocal Rank Fusion), which combines ranking position from both searches without over-weighting either signal. An LR (logistic regression) fusion mode is also available for environments where a trained model can learn the optimal blend of signals.

This phase returns a rough shortlist: episodes that are probably relevant to your query.

Phase 2: Setting up the search structure

Before expanding into atomic facts, mRAG initialises two data structures:

A min-heap that holds the current top-N results. The heap makes it efficient to decide in O(1) time whether a new candidate is good enough to displace an existing one.

A max-heap over all the coarse results, ordered by relevance score, used to decide which episodes to expand next.

Think of it as: the min-heap is the answer you'd give right now, and the max-heap is the queue of episodes still waiting to be investigated.

Phase 3: Expansion — the core of mRAG

This is where mRAG does something no flat retrieval system does.

The pipeline processes the episode queue in batches. For each episode it pops, it retrieves the atomic facts associated with that episode — the specific, discrete statements extracted from that conversation or document — and scores them against your original query.

The scoring formula is:

final_score = α × fact_score + (1 - α) × episode_score

Where:

fact_score is how closely the atomic fact matches your query (logistic regression of cosine similarity and BM25)

episode_score is the propagated relevance of the parent episode (logistic regression of cosine similarity and BM25 from Phase 1)

α balances how much weight to give the fact itself versus the episode it came from (default: 0.5)

The critical design: when an atomic fact scores well enough to enter the top-N results, its parent episode is removed from that set. The precise fact replaces the coarse summary. You get the specific detail, not the container it was stored in.

The loop continues until either all episodes have been processed or there is no improvement across several consecutive rounds, which is a convergence check that prevents wasted computation when the results have already stabilized.

Phase 4: Building the output

Once the loop converges, the top-N set contains a mix of items:

Episodes that were relevant but whose facts didn't score higher

Atomic facts that displaced their parent episodes

Both are surfaced in the response. Everything is sorted by final score before being returned.

Phase 5: [Optional] Reranking

For use cases where precision is critical, mRAG supports an optional final reranking pass. A dedicated rerank model re-scores the Phase 4 output, applying deeper semantic understanding to produce the final ranked list.

This step is off by default as the five-phase pipeline alone already delivers strong precision. But it is available for high-value retrieval scenarios where the extra latency is worth it.

What comes back

A mRAG search response contains two things:

Episodes — the relevant memory records that held context for your query, where no atomic fact cleanly superseded them.

Facts — the precise, atomic statements extracted from memory that directly answer your query. Each fact carries its topic label, its parent memory ID, and its final relevance score.

For example, a query like "what did we decide about the Q2 deadline?" might return:

{ "episodes": [ { "id": "ep_002", "summary": "Team discussed project timeline and resource constraints", "score": 0.72, "atomic_facts": [ { "id": "fact_001", "atomic_fact": "The team agreed the Q2 deadline is unrealistic given current headcount", "topic_name": "Project timeline", "score": 0.91, "parent_episode_id": "ep_002" } ] } ], "profiles": [...], "raw_messages": [...], "agent_memory": {...}, "query": { "text": "...", "method": "hybrid", "filters_applied": {...} } }

mRAG returned both episodes and facts. Episodes provide broader knowledge, while facts provide more precise information.

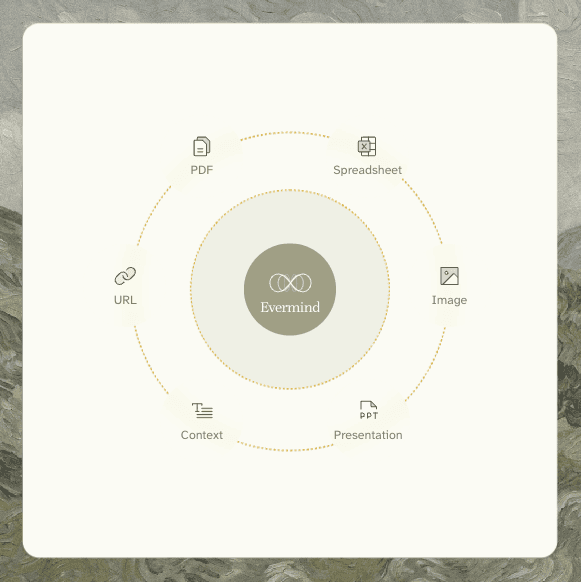

Works across all your content types

mRAG is data-type agnostic. The same pipeline retrieves across conversations, documents, emails, meeting notes, PDFs, spreadsheets, presentations, and images — because all of these are processed into the same Episodic Memory and AtomicFact structures at ingestion time.

Formats supported for multimodal parsing:

PDF (text-based and scanned)

Images (.png, .jpg, .jpeg, .webp, .tiff, .bmp, .svg)

Word documents (.docx, .doc)

Spreadsheets (.xlsx, .xls)

Presentations (.pptx, .ppt)

Email (.eml)

HTML (.html, .htm)

Formats extracted directly without parsing:

Plain text and Markdown (.txt, .md)

Subtitles and transcripts (.vtt)

Tabular plain text (.csv, .tsv)

Whether your relevant context lived in a conversation last Tuesday or in a PDF uploaded six months ago, mRAG retrieves across both with the same query, through the same endpoint.

How to use it

mRAG is available as a retrieval method on the EverOS search endpoint. Set "method": "hybrid" in your search request:

POST /api/v1/memories/search

{ "query": "what did we decide about the Q2 deadline?", "method": "hybrid", "filters": { "user_id": "user_123" }, "top_k": 10 }

The response will include both an episodes array and a facts array. For existing integrations, the change is backward-compatible: all non-mRAG methods return an empty facts field, so nothing breaks if you don't use it.

When to use mRAG vs other retrieval methods

EverOS offers several retrieval methods, each suited to different situations:

keyword — fast, exact-match retrieval. Good for when you know the specific term you're looking for.

vector — pure semantic embedding retrieval based on vector similarity. Good for open-ended, intent-driven queries where meaning matters more than exact words, and for finding contextually similar memories even when terminology differs.

agentic — iterative multi-hop retrieval using Memory Interleave. Good for complex, multi-step reasoning where the answer requires chaining evidence across multiple memories.

hybrid — the default. Hierarchical episode-to-fact retrieval, balancing efficiency and effectiveness. Best for queries where you need precision, where the answer is a specific fact rather than a general summary, and where your memory contains a mix of content types. Particularly strong for queries involving specific decisions, numbers, commitments, or details that tend to get lost inside broader summaries.

The performance picture

Internal benchmarks show the hierarchical approach of mRAG, which retrieves at the fact level rather than the episode level, consistently outperforms flat retrieval on queries that require specific detail. The highest-performing configuration retrieves using source-level context and returns facts, combining the broad coverage of the episode scan with the precision of atomic fact matching.

Flat chunk-based retrieval, by comparison, delivers lower accuracy on specific-detail queries and returns significantly less useful context per result.

All parameters that govern mRAG behavior, including the number of candidates retrieved at each stage, the score propagation weight, the convergence threshold, the reranker toggle, are configurable via environment variables. They are tuned at the system level and not exposed to individual API requests, which means you get strong default performance without configuration overhead.

What this means for your agents

For agents that need to reason about specific past events, e.g., commitments made in meetings, decisions recorded in documents, facts extracted from research, mRAG is the retrieval layer that makes that reasoning reliable.

An agent using standard retrieval might find that a conversation about a project deadline happened. An agent using mRAG finds that the Q2 deadline was slipped by six weeks, confirmed in the March 12 sync, because two engineers were reassigned.

The difference matters when your agent is advising on a project status, drafting a follow-up, or checking whether a prior commitment was kept.

Memory is only useful if retrieval is precise. That is what mRAG is built to do.

mRAG is available now on EverOS. To get started: everos.evermind.ai

Open-source repository: github.com/EverMind-AI/EverOS

Memory systems have a retrieval problem. Most of them find things that sound relevant to your query. EverOS mRAG finds things that are relevant — by going a level deeper than any standard search can reach.

This post explains what mRAG is, why it exists, and what it means for you and your agents.

The problem with how most memory retrieval works

When you ask a memory system something, the standard approach is to take your query, turn it into a vector, and find the stored summaries that are closest to it. Fast, simple, and — for complex questions — frequently wrong.

Here's why. The things you actually need are often buried inside a memory, not visible at the summary level. An episode summary might say "discussed project timelines and team allocation." The detail you need — "the team agreed that the Q2 deadline was unrealistic" — lives two levels down, in the atomic facts extracted from that conversation.

Standard retrieval never gets there. It finds the right neighbourhood but returns you the street address when you need the apartment number.

mRAG is built to fix this.

What mRAG does differently

mRAG stands for Multimodal RAG. The core idea is a hierarchical search: start with a coarse scan of episode summaries to find the right general territory, then expand into the precise atomic facts within those episodes — and use those facts to replace the coarser summaries in the final result.

In other words, mRAG treats retrieval as a two-level problem. Summaries are a map. Atomic facts are the territory. You navigate using the map, but you return the territory.

The result is a set of specific, verifiable, fine-grained facts that precisely answer your query — not a collection of vague summaries that approximately relate to it.

How it works: a five-phase pipeline

Phase 1: Coarse retrieval across episode summaries

mRAG starts by running two searches in parallel against your episode memory:

An embedding search — comparing the semantic meaning of your query against episode summaries, using cosine similarity

A BM25 keyword search — matching the actual words in your query against episode and summary text

The two sets of results are then fused into a single ranked list. By default, EverOS uses RRF (Reciprocal Rank Fusion), which combines ranking position from both searches without over-weighting either signal. An LR (logistic regression) fusion mode is also available for environments where a trained model can learn the optimal blend of signals.

This phase returns a rough shortlist: episodes that are probably relevant to your query.

Phase 2: Setting up the search structure

Before expanding into atomic facts, mRAG initialises two data structures:

A min-heap that holds the current top-N results. The heap makes it efficient to decide in O(1) time whether a new candidate is good enough to displace an existing one.

A max-heap over all the coarse results, ordered by relevance score, used to decide which episodes to expand next.

Think of it as: the min-heap is the answer you'd give right now, and the max-heap is the queue of episodes still waiting to be investigated.

Phase 3: Expansion — the core of mRAG

This is where mRAG does something no flat retrieval system does.

The pipeline processes the episode queue in batches. For each episode it pops, it retrieves the atomic facts associated with that episode — the specific, discrete statements extracted from that conversation or document — and scores them against your original query.

The scoring formula is:

final_score = α × fact_score + (1 - α) × episode_score

Where:

fact_score is how closely the atomic fact matches your query (logistic regression of cosine similarity and BM25)

episode_score is the propagated relevance of the parent episode (logistic regression of cosine similarity and BM25 from Phase 1)

α balances how much weight to give the fact itself versus the episode it came from (default: 0.5)

The critical design: when an atomic fact scores well enough to enter the top-N results, its parent episode is removed from that set. The precise fact replaces the coarse summary. You get the specific detail, not the container it was stored in.

The loop continues until either all episodes have been processed or there is no improvement across several consecutive rounds, which is a convergence check that prevents wasted computation when the results have already stabilized.

Phase 4: Building the output

Once the loop converges, the top-N set contains a mix of items:

Episodes that were relevant but whose facts didn't score higher

Atomic facts that displaced their parent episodes

Both are surfaced in the response. Everything is sorted by final score before being returned.

Phase 5: [Optional] Reranking

For use cases where precision is critical, mRAG supports an optional final reranking pass. A dedicated rerank model re-scores the Phase 4 output, applying deeper semantic understanding to produce the final ranked list.

This step is off by default as the five-phase pipeline alone already delivers strong precision. But it is available for high-value retrieval scenarios where the extra latency is worth it.

What comes back

A mRAG search response contains two things:

Episodes — the relevant memory records that held context for your query, where no atomic fact cleanly superseded them.

Facts — the precise, atomic statements extracted from memory that directly answer your query. Each fact carries its topic label, its parent memory ID, and its final relevance score.

For example, a query like "what did we decide about the Q2 deadline?" might return:

{ "episodes": [ { "id": "ep_002", "summary": "Team discussed project timeline and resource constraints", "score": 0.72, "atomic_facts": [ { "id": "fact_001", "atomic_fact": "The team agreed the Q2 deadline is unrealistic given current headcount", "topic_name": "Project timeline", "score": 0.91, "parent_episode_id": "ep_002" } ] } ], "profiles": [...], "raw_messages": [...], "agent_memory": {...}, "query": { "text": "...", "method": "hybrid", "filters_applied": {...} } }

mRAG returned both episodes and facts. Episodes provide broader knowledge, while facts provide more precise information.

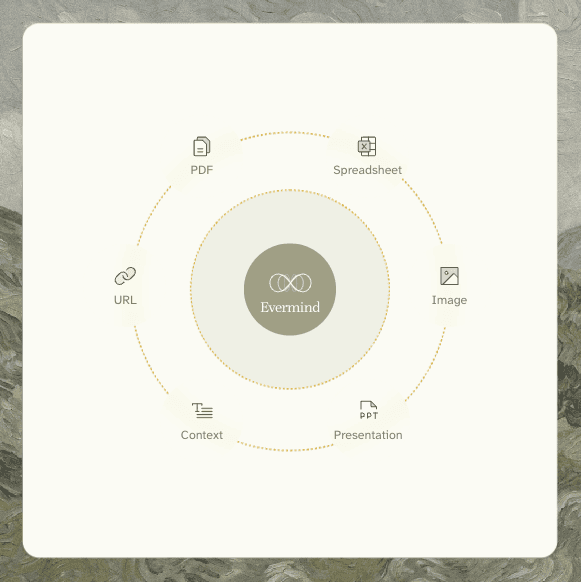

Works across all your content types

mRAG is data-type agnostic. The same pipeline retrieves across conversations, documents, emails, meeting notes, PDFs, spreadsheets, presentations, and images — because all of these are processed into the same Episodic Memory and AtomicFact structures at ingestion time.

Formats supported for multimodal parsing:

PDF (text-based and scanned)

Images (.png, .jpg, .jpeg, .webp, .tiff, .bmp, .svg)

Word documents (.docx, .doc)

Spreadsheets (.xlsx, .xls)

Presentations (.pptx, .ppt)

Email (.eml)

HTML (.html, .htm)

Formats extracted directly without parsing:

Plain text and Markdown (.txt, .md)

Subtitles and transcripts (.vtt)

Tabular plain text (.csv, .tsv)

Whether your relevant context lived in a conversation last Tuesday or in a PDF uploaded six months ago, mRAG retrieves across both with the same query, through the same endpoint.

How to use it

mRAG is available as a retrieval method on the EverOS search endpoint. Set "method": "hybrid" in your search request:

POST /api/v1/memories/search

{ "query": "what did we decide about the Q2 deadline?", "method": "hybrid", "filters": { "user_id": "user_123" }, "top_k": 10 }

The response will include both an episodes array and a facts array. For existing integrations, the change is backward-compatible: all non-mRAG methods return an empty facts field, so nothing breaks if you don't use it.

When to use mRAG vs other retrieval methods

EverOS offers several retrieval methods, each suited to different situations:

keyword — fast, exact-match retrieval. Good for when you know the specific term you're looking for.

vector — pure semantic embedding retrieval based on vector similarity. Good for open-ended, intent-driven queries where meaning matters more than exact words, and for finding contextually similar memories even when terminology differs.

agentic — iterative multi-hop retrieval using Memory Interleave. Good for complex, multi-step reasoning where the answer requires chaining evidence across multiple memories.

hybrid — the default. Hierarchical episode-to-fact retrieval, balancing efficiency and effectiveness. Best for queries where you need precision, where the answer is a specific fact rather than a general summary, and where your memory contains a mix of content types. Particularly strong for queries involving specific decisions, numbers, commitments, or details that tend to get lost inside broader summaries.

The performance picture

Internal benchmarks show the hierarchical approach of mRAG, which retrieves at the fact level rather than the episode level, consistently outperforms flat retrieval on queries that require specific detail. The highest-performing configuration retrieves using source-level context and returns facts, combining the broad coverage of the episode scan with the precision of atomic fact matching.

Flat chunk-based retrieval, by comparison, delivers lower accuracy on specific-detail queries and returns significantly less useful context per result.

All parameters that govern mRAG behavior, including the number of candidates retrieved at each stage, the score propagation weight, the convergence threshold, the reranker toggle, are configurable via environment variables. They are tuned at the system level and not exposed to individual API requests, which means you get strong default performance without configuration overhead.

What this means for your agents

For agents that need to reason about specific past events, e.g., commitments made in meetings, decisions recorded in documents, facts extracted from research, mRAG is the retrieval layer that makes that reasoning reliable.

An agent using standard retrieval might find that a conversation about a project deadline happened. An agent using mRAG finds that the Q2 deadline was slipped by six weeks, confirmed in the March 12 sync, because two engineers were reassigned.

The difference matters when your agent is advising on a project status, drafting a follow-up, or checking whether a prior commitment was kept.

Memory is only useful if retrieval is precise. That is what mRAG is built to do.

mRAG is available now on EverOS. To get started: everos.evermind.ai

Open-source repository: github.com/EverMind-AI/EverOS