You may also like these

Related

Introducing mRAG: How EverOS Retrieves What Actually Matters

mRAG, multimodal, multimodal retrieval, RAG

Introducing Self-Evolving Agent Memory: How EverOS Helps Your AI Agents Learn from Experience

Self-Evolving Agent Memory, Agent Memory, Self-Evolving, Agent Skills, Agent Cases

Breaking the 100M Token Limit: MSA Architecture Achieves Efficient End-to-End Long-Term Memory for LLMs

long term memory, RAG, context, ai agent, OpenClaw, sparse attention, transformers, LLM, KV cache

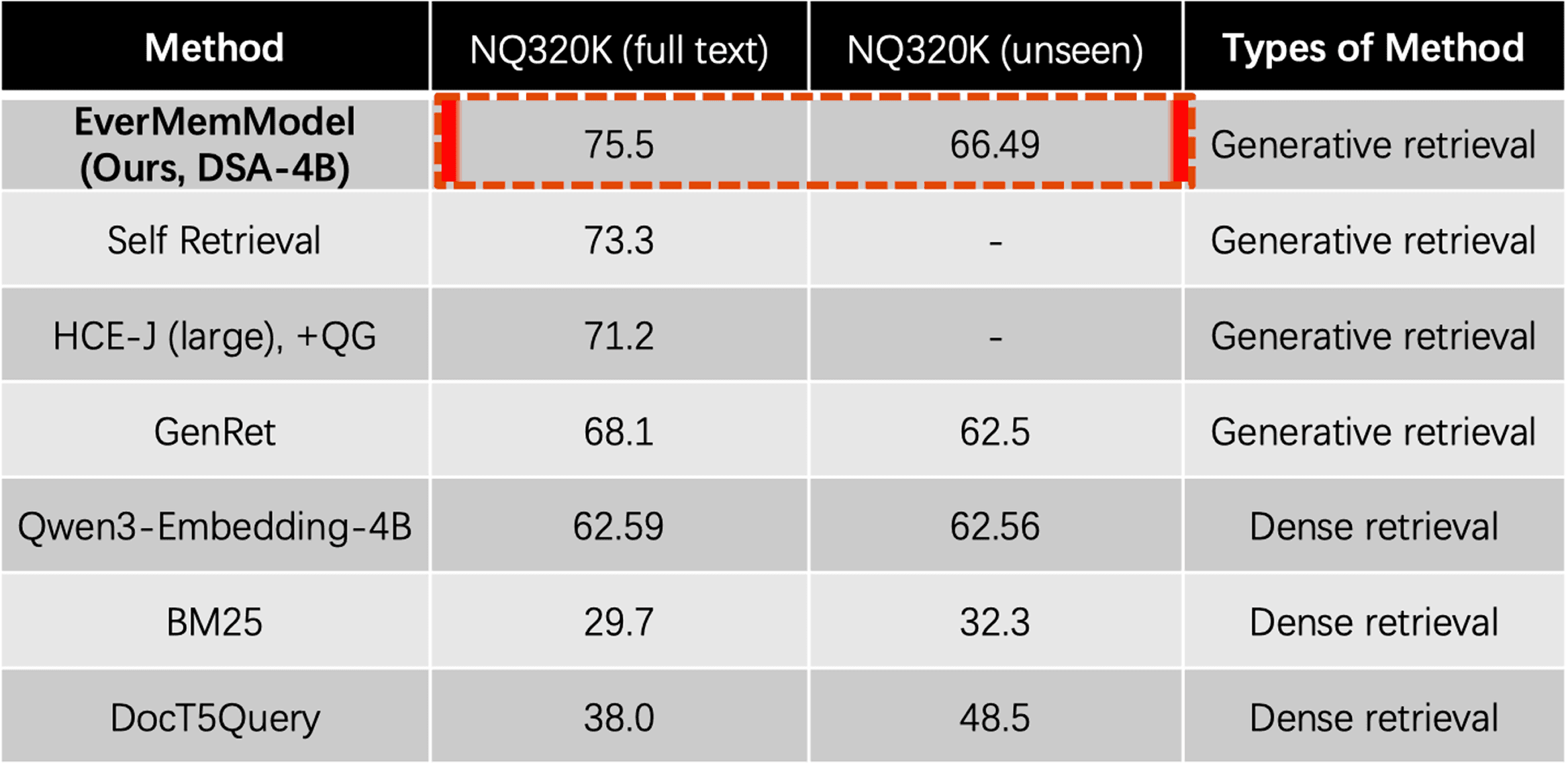

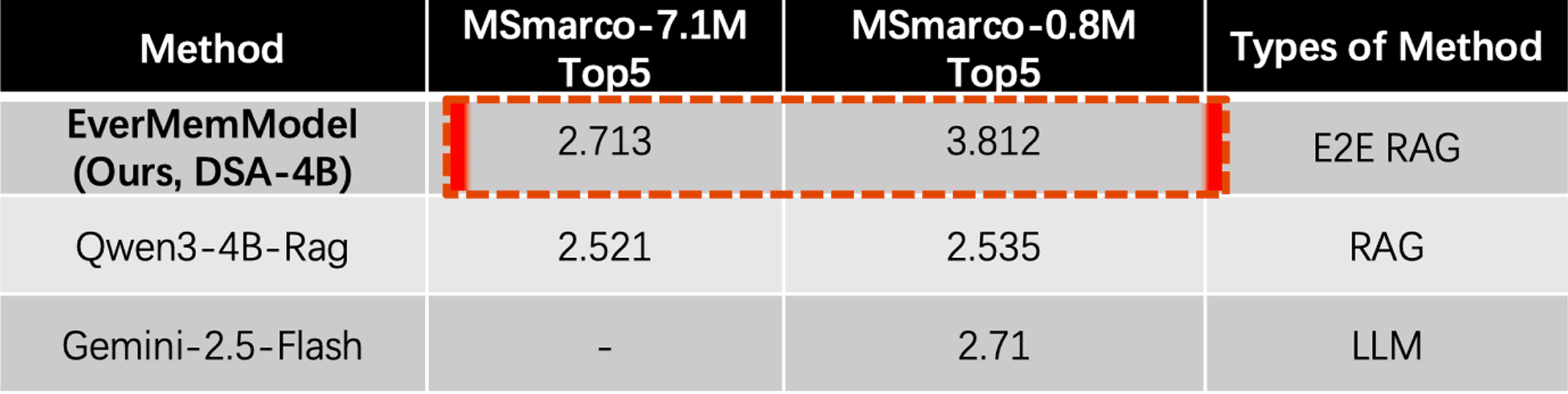

EverOS: SOTA Results Across Four Memory Benchmarks and What It Means for LLM Agents

EverOS, long term memory, RAG, context, LoCoMo, LongMemEval, PersonaMem